Deploying Django with Docker Compose

There are a number of ways you can deploy a Django app.

One of the simplest is by running the app using Docker Compose directly on a Linux virtual machine.

The benefits to this approach are:

- Fast and easy to get up and running.

- Define your deployment configuration with your code.

- Consistent development and production environments because you use the same Docker image.

However, the drawbacks of this approach are:

- It’s not super scalable because everything runs on a single server.

- Single point of failure.

- Manual deployment / update process.

You might want to use this if you want a low cost deployment solution for a simple app you built using Django.

If you need a more reliable and scalable option, you may want to take a look at our course: DevOps Deployment Automation with Terraform, AWS and Docker.

In this tutorial I’ll show you how to:

- Configure project for deployment.

- Handle media and static files.

- Run migrations.

- Push updates to your app.

Pre-requisites

Before following this tutorial, you’ll need the following:

- An AWS account with access to create a new EC2 instance (feel free to use another cloud provider as long as you’re confident in creating and configuring virtual machines).

- Docker Desktop installed on your machine.

- A code editor.

- A GitHub account.

- Knowledge of SSH key authentication (if you’re not familiar with it, there is a good guide on GitHub).

Create Django Project

We’re going to start by creating a Django project.

Setup Docker

To create a Django project, we’ll create a new directory for storing our project files (eg: deploy-django-with-docker-compose/)

In the root of the project create a .gitignore file, and include the contents provided by the GitHub template gitignore/Python.gitignore.

Then create a new file called requirements.txt and add the following contents:

Django>=3.2.3,<3.3

We use this to install the latest patch release of Django 3.2: version 3.2.3 or greater, but less than 3.3. This helps ensure security patches are installed while preventing Django from being upgraded to a minor release which may contain breaking changes.
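To see which releases that range lets in, here is a minimal Python sketch. It assumes plain dotted numeric versions only; the helper names parse_version and in_pinned_range are made up for illustration, and pip's real resolver follows the fuller PEP 440 rules (pre-releases and so on) that this does not model.

```python
def parse_version(text):
    """Turn '3.2.10' into (3, 2, 10) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


def in_pinned_range(version, lower="3.2.3", upper="3.3"):
    """Return True when lower <= version < upper (hypothetical helper)."""
    return parse_version(lower) <= parse_version(version) < parse_version(upper)


# Patch releases of 3.2 are accepted; 3.3 and later are rejected.
for candidate in ("3.2.2", "3.2.3", "3.2.25", "3.3", "4.0"):
    print(candidate, in_pinned_range(candidate))
```

Comparing tuples of integers avoids the string-comparison trap where "3.2.10" would sort before "3.2.3".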

Create an empty directory called app/ inside the project, which we’ll use to store our Django project.

Now create a file called Dockerfile with the following contents:

FROM python:3.9-alpine3.13
LABEL maintainer="londonappdeveloper.com"

ENV PYTHONUNBUFFERED 1

COPY ./requirements.txt /requirements.txt
COPY ./app /app

WORKDIR /app
EXPOSE 8000

RUN python -m venv /py && \
    /py/bin/pip install --upgrade pip && \
    /py/bin/pip install -r /requirements.txt && \
    adduser --disabled-password --no-create-home app

ENV PATH="/py/bin:$PATH"

USER app

This is a standard Dockerfile I use for my Django projects.

It has the following features:

- Based on Python 3.9 alpine image which is a lightweight base image for running Python apps. I pinned it to specific versions to improve stability and consistency of the steps in this tutorial.

- Added a maintainer label (feel free to change this).

- Sets PYTHONUNBUFFERED to 1 so that Python outputs are sent straight to the container logs.

- Copies the requirements.txt file to the image.

- Copies the app/ directory to the image.

- Sets /app as our working directory when running commands from the Docker image.

- Exposes port 8000 which will be used for local development.

- Then we have a RUN line which is broken into multiple lines. This helps keep our image small, because Docker creates a new layer for each RUN instruction, but by chaining the commands like this only one layer is created.

- Creates a virtual environment in the image at /py.

- Upgrades pip to the latest version.

- Installs the dependencies defined in requirements.txt.

- Adds a new user called app that will be used to run our app.

- Adds our /py virtual environment to the PATH (which means we can use it without specifying the full /py/bin/python path each time).

- Sets the image user to app.

Add a new file called docker-compose.yml with the following contents:

version: '3.9'

services:
  app:
    build:
      context: .
    ports:
      - 8000:8000
    volumes:
      - ./app:/app

This does the following:

- Uses Docker Compose syntax version 3.9.

- Creates a service called app.

- Sets the build context to the current directory.

- Maps port 8000 on the host (your computer) to 8000 on the container.

- Creates a volume which maps the app/ directory in our project to /app in the container, which allows us to sync our code changes to the container running our dev server.

Create a file called .dockerignore, and add the following contents:

# Git
.git
.gitignore

# Docker
.docker

# Python
app/__pycache__/
app/*/__pycache__/
app/*/*/__pycache__/
app/*/*/*/__pycache__/
.env/
.venv/
venv/

# Local PostgreSQL data
data/

This is a configuration file that excludes certain files and directories from the Docker build context.

It’s needed because there are a number of files that we don’t need to add to our Docker image.

Now, build the image by running:

docker-compose build

Note: If you get an error about the app/ directory not being found, ensure you added an empty directory called app/ inside your project.

The image should build successfully.

Create and Configure Django Project

Now run the following command to create a new Django project:

docker-compose run --rm app sh -c "django-admin startproject app ."

This will create a new Django project in the app/ directory of our project.

Next we’ll update settings.py to pull configuration values from environment variables that we can set in our docker-compose.yml file, and also customise for deployment.

At the top of settings.py, import the os module by adding the following line:

import os

Then, also inside settings.py, update the SECRET_KEY, DEBUG and ALLOWED_HOSTS lines to the following:

# SECURITY WARNING: keep the secret key used in production secret!
SECRET_KEY = os.environ.get('SECRET_KEY')

# SECURITY WARNING: don't run with debug turned on in production!
DEBUG = bool(int(os.environ.get('DEBUG', 0)))

ALLOWED_HOSTS = []
ALLOWED_HOSTS.extend(
    filter(
        None,
        os.environ.get('ALLOWED_HOSTS', '').split(','),
    )
)

The os.environ.get('NAME') function will retrieve the value of an environment variable.

However, environment variables can only have one type (string).

In order to set config values of different types, we need to pass them through the appropriate function to convert them.

For example, for DEBUG we need a boolean, so we first pull in the DEBUG value (which should be set to 1 or 0), convert it to an integer using the int() function, then convert that to a boolean using the bool() function.

We provide the default of 0, so debug mode is always disabled by default, unless we override it by setting DEBUG=1. This is to reduce the risk of accidentally enabling debug mode in production which can be a security risk.

The ALLOWED_HOSTS setting is a security feature of Django which needs to contain a list of domain names that are allowed to access the app when debug mode is disabled.

It needs to be a list and, as we mentioned above, environment variable values always arrive as strings, so we accept it as a comma-separated list which we split up and append to the ALLOWED_HOSTS setting.

We pass it through the filter() function to drop any empty strings, which is what split() produces when the variable is unset or contains a stray comma.
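The conversions above can be tried outside Django. The sketch below applies the same expressions to a plain dictionary standing in for os.environ; the function names parse_debug and parse_allowed_hosts are hypothetical and exist only to make the behaviour easy to test.

```python
def parse_debug(env):
    """Mirror the DEBUG line: '1' -> True, '0' or unset -> False."""
    return bool(int(env.get("DEBUG", 0)))


def parse_allowed_hosts(env):
    """Mirror the ALLOWED_HOSTS lines: split on commas, drop empty strings."""
    hosts = []
    hosts.extend(filter(None, env.get("ALLOWED_HOSTS", "").split(",")))
    return hosts


# A dict is used here in place of os.environ so the example is repeatable.
print(parse_debug({}))
print(parse_debug({"DEBUG": "1"}))
print(parse_allowed_hosts({}))
print(parse_allowed_hosts({"ALLOWED_HOSTS": "example.com,,www.example.com"}))
```

Note that a non-numeric value such as DEBUG=true would raise a ValueError in int(), so stick to 1 or 0.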

Then, add the following to the bottom of the app service in docker-compose.yml:

    environment:
      - SECRET_KEY=devsecretkey
      - DEBUG=1

This configures the project to pull certain configuration values from environment variables.

The reason we do this is to provide a single place where we can customise our app, to follow the Twelve-Factor App model.

Add Database

Next we’re going to configure our app to use a database.

We’ll start by adding a new service to docker-compose.yml called db, which looks like this:

  db:
    image: postgres:13-alpine
    environment:
      - POSTGRES_DB=devdb
      - POSTGRES_USER=devuser
      - POSTGRES_PASSWORD=changeme

This will define a new service based on the postgres:13-alpine image from Docker Hub.

We use environment variables to set the database name (devdb), the root user (devuser) and the password (changeme). Don’t worry about changing these as they will only be used for our local development server. I’ll show you how to customise them on your deployment later.

Then tack this onto the end of the app service:

      - DB_HOST=db
      - DB_NAME=devdb
      - DB_USER=devuser
      - DB_PASS=changeme
    depends_on:
      - db

This does the following:

- The first four lines are added to the environment block, which sets the database config so Django knows how to connect. These settings must match the settings we added under the db service.

- The final depends_on block will ensure the db service starts before app, and makes db accessible via the network from inside the app containers.

Next we need to modify our Dockerfile to install the PostgreSQL client which will be used by the Psycopg2 driver that Django uses to access the database.

Update the RUN block in your Dockerfile to read the following:

RUN python -m venv /py && \
    /py/bin/pip install --upgrade pip && \
    apk add --update --no-cache postgresql-client && \
    apk add --update --no-cache --virtual .tmp-deps \
        build-base postgresql-dev musl-dev && \
    /py/bin/pip install -r /requirements.txt && \
    apk del .tmp-deps && \
    adduser --disabled-password --no-create-home app

The above modification will do the following:

- Use apk to install the postgresql-client, which is needed by the driver Django uses to access PostgreSQL.

- Install build-base, postgresql-dev and musl-dev, which are all dependencies needed to install the driver using pip.

- We then install the contents of requirements.txt before deleting our temporary build dependencies to keep our image as small as possible.

Now add the following to the requirements.txt file to install the driver:

psycopg2>=2.8.6,<2.9

Run docker-compose build to build our image with the latest changes.

Next we need to configure Django to connect to our database.

Inside app/app/settings.py, locate the DATABASES block and replace it with the following:

DATABASES = {
    'default': {
        'ENGINE': 'django.db.backends.postgresql',
        'HOST': os.environ.get('DB_HOST'),
        'NAME': os.environ.get('DB_NAME'),
        'USER': os.environ.get('DB_USER'),
        'PASSWORD': os.environ.get('DB_PASS'),
    }
}

This will configure Django to use PostgreSQL with the credentials pulled from the environment variables.

Create model

Next we’ll create an app and add a model to test with by running the following:

docker-compose run --rm app sh -c "python manage.py startapp core"

Now open app/app/settings.py and update INSTALLED_APPS to the following:

INSTALLED_APPS = [
    'django.contrib.admin',
    'django.contrib.auth',
    'django.contrib.contenttypes',
    'django.contrib.sessions',
    'django.contrib.messages',
    'django.contrib.staticfiles',
    'core',
]

Open up app/core/models.py and add the following:

from django.db import models


class Sample(models.Model):
    attachment = models.FileField()

This will create a model which contains an attachment which we can use to test handling media files.

Now register the new model in app/core/admin.py:

from django.contrib import admin

from core.models import Sample

admin.site.register(Sample)

Now run the following command to generate the migrations for adding the new model:

docker-compose run --rm app sh -c "python manage.py makemigrations"

Wait for database

There is a problem when using Django with a database running in Docker…

Even though adding the depends_on block to the app service ensures that the db service starts before the app, it doesn’t ensure that the database has been initialised.

This can lead to an issue where Django crashes because it tries to connect to the database before it’s fully up and running.

The solution is to add a Django management command that waits for the database, and run this command before starting the app.

Create two new empty files in the project at the following paths:

- app/core/management/__init__.py

- app/core/management/commands/__init__.py

Then create a new file at app/core/management/commands/wait_for_db.py and add the following contents:

"""
Django command to wait for the database to be available.
"""
import time

from psycopg2 import OperationalError as Psycopg2OpError

from django.db.utils import OperationalError
from django.core.management.base import BaseCommand


class Command(BaseCommand):
    """Django command to wait for database."""

    def handle(self, *args, **options):
        """Entrypoint for command."""
        self.stdout.write('Waiting for database...')
        db_up = False
        while db_up is False:
            try:
                self.check(databases=['default'])
                db_up = True
            except (Psycopg2OpError, OperationalError):
                self.stdout.write('Database unavailable, waiting 1 second...')
                time.sleep(1)

        self.stdout.write(self.style.SUCCESS('Database available!'))

This code snippet adds a Django command which keeps looping, catching the exception that is thrown while the database is still unavailable and waiting one second before trying again.

As soon as the exception stops being thrown, we can assume the database is now available.
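The same retry pattern can be sketched without Django. The version below is a variation rather than the command above: it adds a cap on the number of attempts (the tutorial's command retries forever), uses the built-in ConnectionError as a stand-in for the database exceptions, and the name wait_for is hypothetical.

```python
import time


def wait_for(check, delay=1.0, max_attempts=30, sleep=time.sleep):
    """Call check() until it stops raising ConnectionError.

    Returns the number of attempts it took, or raises TimeoutError
    once max_attempts have failed.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            check()
            return attempt
        except ConnectionError:
            # Service not ready yet: pause, then try again.
            sleep(delay)
    raise TimeoutError(f"service still unavailable after {max_attempts} attempts")


def make_flaky_check(failures):
    """Build a fake check that fails a fixed number of times, then succeeds."""
    state = {"remaining": failures}

    def check():
        if state["remaining"] > 0:
            state["remaining"] -= 1
            raise ConnectionError("not ready")

    return check


# No real sleeping here, so the demonstration finishes immediately.
print(wait_for(make_flaky_check(2), sleep=lambda seconds: None))
```

Capping the attempts is a design choice: in a container with restart: always, failing loudly and letting Docker restart the service can be easier to spot than a command that waits indefinitely.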

Handle migrations

Now we need to configure our app to run migrations and start the development server.

We can do this by updating docker-compose.yml by including the following command under the app service:

    command: >
      sh -c "python manage.py wait_for_db &&
             python manage.py migrate &&
             python manage.py runserver 0.0.0.0:8000"

Now run the following:

docker-compose up

The server should start and if you head over to http://127.0.0.1:8000 you should see the Django placeholder landing page.

Handling Static and Media Files

One common challenge people face when working with Django is with handling static and media files.

When we deploy Django, we run it using a WSGI service.

WSGI stands for Web Server Gateway Interface, and it’s the standard interface a web server uses to pass HTTP requests to Python code.

Although WSGI can serve images and binary files, it’s not very efficient at it.

So the recommended approach is to put a reverse proxy in front of the Django app which serves all requests starting with /static from the filesystem, and passes the rest to the WSGI service.

Popular options for the reverse proxy include Apache and nginx.

I prefer nginx because I find the documentation easy to understand and it works well with uWSGI.

Because we’re using Docker, we need to configure both Django and our Docker services to store static files in a shared volume that is accessible by the reverse proxy service.

Configure Docker with Volumes

Open the Dockerfile and add && \ to the end of the RUN block, then add this:

    mkdir -p /vol/web/static && \
    mkdir -p /vol/web/media && \
    chown -R app:app /vol && \
    chmod -R 755 /vol

This does the following:

- Creates the directories /vol/web/static and /vol/web/media.

- Sets the owner of /vol (and everything inside it) to our app user.

- Ensures the app user has permissions to read, write and delete contents of that directory.

Next, open docker-compose.yml, locate the app service and update the volumes block to the following:

    volumes:
      - ./app:/app
      - ./data/web:/vol/web

This will map data/web/ in our project to /vol/web on the container, so we can test it is working correctly before we set up our deployment.

If you’re using Git, add the following to your .gitignore to ensure any static or media files are not committed to your project:

/data

Configure Django Media and Static Files

The next step is to tell Django to use this new volume for our static and media files.

In case you don’t know the difference:

- Static – Files are assets used for our Django HTML content such as JavaScript, CSS and Image files.

- Media – Files which are dynamically added to our app at runtime, such as user uploaded data like profile pictures.

Open app/app/settings.py, locate the line that starts with STATIC_URL (near the bottom of the file) and replace it with the following:

STATIC_URL = '/static/static/'
MEDIA_URL = '/static/media/'

MEDIA_ROOT = '/vol/web/media'
STATIC_ROOT = '/vol/web/static'

The first two lines that end with _URL are for configuring the URLs used by Django for static and media files.

All static file URLs will be automatically prefixed with /static/static/ and all media URLs are prefixed with /static/media/.

We can use this URL structure to configure our nginx reverse proxy to handle these URLs.

The last two lines which end with _ROOT set the location on the file system where these files will be stored.

The media files will be stored in /vol/web/media, and the static files will be stored in /vol/web/static.

The media files are added while the app is running (for example, if a user uploads a profile image) and the static files are generated during the deployment when we run the collectstatic command.

Now we need to modify our URLs to serve media files through our development server.

Open up app/app/urls.py and update it to this:

from django.contrib import admin
from django.urls import path
from django.conf.urls.static import static
from django.conf import settings

urlpatterns = [
    path('admin/', admin.site.urls),
]

if settings.DEBUG:
    urlpatterns += static(
        settings.MEDIA_URL,
        document_root=settings.MEDIA_ROOT,
    )

Above we are importing the static function (used to generate static URLs) and the settings module.

Then, if in DEBUG mode, we call the static function while passing in the MEDIA_URL and MEDIA_ROOT configurations, and append the output to the urlpatterns list.

This change is purely for serving files through our local development server, and isn’t used in the production deployment.

Test with Development Server

Now let’s test our project using the dev server.

We can test the handling of our media files by uploading a file using the Django admin.

First we need to create a superuser by running the following command:

docker-compose run --rm app sh -c "python manage.py createsuperuser"

Fill out the details when asked:

Then run the following command to start the server:

docker-compose up

Navigate to http://127.0.0.1:8000/admin and log in:

Once logged in, choose Add next to Samples to create a new model instance:

Then click Browse next to attachment and choose a file (any file will do, an image is best).

Then click SAVE, and the model should be created.

Select the instance of the sample model we just added:

Click on the link to the file you uploaded:

When you click it, you should see the image (or file) you uploaded displayed in the browser.

If you open up your VSCode editor, you should also see that the file is added inside data/web/media/:

If you see this, then everything is configured correctly.

Add Deployment Configuration

Next we are going to configure our project for deployment.

This will involve two things:

- Creating a reverse proxy using Docker and nginx.

- Adding a new Docker Compose configuration file for deployment.

Create the Reverse Proxy

Create a new directory called proxy/.

Then add a new file at proxy/uwsgi_params with the following contents:

uwsgi_param QUERY_STRING $query_string;
uwsgi_param REQUEST_METHOD $request_method;
uwsgi_param CONTENT_TYPE $content_type;
uwsgi_param CONTENT_LENGTH $content_length;
uwsgi_param REQUEST_URI $request_uri;
uwsgi_param PATH_INFO $document_uri;
uwsgi_param DOCUMENT_ROOT $document_root;
uwsgi_param SERVER_PROTOCOL $server_protocol;
uwsgi_param REMOTE_ADDR $remote_addr;
uwsgi_param REMOTE_PORT $remote_port;
uwsgi_param SERVER_ADDR $server_addr;
uwsgi_param SERVER_PORT $server_port;
uwsgi_param SERVER_NAME $server_name;

This is a template taken from the official uWSGI docs on Nginx support.

The purpose of this file is to map some header values to the request being passed to uWSGI.

This is useful if you need to retrieve header information from your Django requests.

Next add a file at proxy/default.conf.tpl with the following contents:

server {
    listen ${LISTEN_PORT};

    location /static {
        alias /vol/static;
    }

    location / {
        uwsgi_pass ${APP_HOST}:${APP_PORT};
        include /etc/nginx/uwsgi_params;
        client_max_body_size 10M;
    }
}

This will be our nginx configuration file which does the following:

- Pulls in environment variable values for configuration (eg: ${LISTEN_PORT} will be replaced with the LISTEN_PORT value we assign later).

- Creates a server block that listens on the specified port.

- Adds a location block to catch all URLs that start with /static, and maps it to the volume at /vol/static. This is the part that serves the static and media files.

- Adds a location block for all other requests and uses uwsgi_pass to forward them to our uWSGI service. Because nginx picks the longest matching prefix, this / block catches every request that the /static block does not.

- Sets the client_max_body_size to 10 megabytes, which means we can upload files up to this size (increase as needed).
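To make the /static mapping concrete, here is a small Python sketch of how a prefix location with an alias turns a request path into a file path. It is a simplified model for illustration only: the function name resolve_alias is hypothetical, and nginx itself does considerably more (URI normalisation, choosing between several locations, and so on).

```python
def resolve_alias(request_path, location="/static", alias="/vol/static"):
    """Model of an nginx prefix location with an alias directive.

    If the request path starts with the location prefix, the prefix is
    swapped for the alias path. Otherwise None is returned, meaning the
    request would fall through to another location block.
    """
    if not request_path.startswith(location):
        return None
    return alias + request_path[len(location):]


# Static and media URLs generated by Django both land under /vol/static.
print(resolve_alias("/static/static/admin/css/base.css"))
print(resolve_alias("/static/media/photo.png"))
# Anything else is left for the uWSGI location.
print(resolve_alias("/admin/"))
```

This is why STATIC_URL and MEDIA_URL both begin with /static/: a single location block can then serve both directories.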

Create a file at proxy/run.sh with the following contents:

#!/bin/sh

set -e

envsubst < /etc/nginx/default.conf.tpl > /etc/nginx/conf.d/default.conf
nginx -g 'daemon off;'

This is a shell script that we will use to start our proxy.

It does the following:

- set -e will make the script fail if any line fails, which is helpful for debugging.

- The envsubst line substitutes environment variables in a file. This is what replaces the ${ENV_VAR} syntax with the actual values set in the environment variables we use for configuration.

- The nginx line starts the nginx server (daemon off tells it to run in the foreground, which is recommended for Docker because the log outputs are printed straight to the console output).
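If you want to preview the rendered configuration without building the image, the substitution step can be approximated in Python with string.Template, which understands the same ${NAME} placeholders. This is only a sketch: the render function is a hypothetical helper, the template is a shortened copy of default.conf.tpl, and unlike envsubst, safe_substitute leaves unknown placeholders in place instead of replacing them with an empty string.

```python
from string import Template

# Shortened copy of proxy/default.conf.tpl for demonstration purposes.
TEMPLATE = """server {
    listen ${LISTEN_PORT};

    location / {
        uwsgi_pass ${APP_HOST}:${APP_PORT};
    }
}
"""


def render(template_text, env):
    """Replace ${NAME} placeholders with values from the env mapping."""
    return Template(template_text).safe_substitute(env)


# These values match the defaults set in proxy/Dockerfile.
defaults = {"LISTEN_PORT": "8000", "APP_HOST": "app", "APP_PORT": "9000"}
print(render(TEMPLATE, defaults))
```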

Finally we’ll pull these config files together by creating a file at proxy/Dockerfile with the following contents:

FROM nginxinc/nginx-unprivileged:1-alpine
LABEL maintainer="londonappdeveloper.com"

COPY ./default.conf.tpl /etc/nginx/default.conf.tpl
COPY ./uwsgi_params /etc/nginx/uwsgi_params
COPY ./run.sh /run.sh

ENV LISTEN_PORT=8000
ENV APP_HOST=app
ENV APP_PORT=9000

USER root

RUN mkdir -p /vol/static && \
    chmod 755 /vol/static && \
    touch /etc/nginx/conf.d/default.conf && \
    chown nginx:nginx /etc/nginx/conf.d/default.conf && \
    chmod +x /run.sh

VOLUME /vol/static

USER nginx

CMD ["/run.sh"]

This does the following:

- Bases our image off nginxinc/nginx-unprivileged:1-alpine on Docker Hub — I use this image because (unlike the main nginx image) it doesn’t run nginx as the root user, which can be a security issue.

- Sets the maintainer label (feel free to change this).

- Copies the configuration files we created.

- Sets default environment variable values for LISTEN_PORT, APP_HOST and APP_PORT. These can be overridden later if needed.

- Switches to the root user in order to manage files and configuration.

- Runs some commands which create a new directory for our static volume, set its permissions, create an empty file for default.conf, set the ownership of this file to the nginx user (required so that envsubst can replace the file at runtime), and make the run.sh script executable.

- Exposes /vol/static as a volume.

- Switches back to the nginx user.

- Runs the run.sh script.

Configure app for Deployment

Now we are going to finish configuring our Django app for deployment.

Create a new directory called scripts and a new file at scripts/run.sh with the following content:

#!/bin/sh

set -e

python manage.py wait_for_db
python manage.py collectstatic --noinput
python manage.py migrate

uwsgi --socket :9000 --workers 4 --master --enable-threads --module app.wsgi

This is the script that we will use to run our app using uWSGI.

It does the following:

- Runs wait_for_db to ensure the database is available before continuing to start the app.

- Runs the collectstatic command which will gather all the static files for our project and place them in STATIC_ROOT so our nginx service can access them via the shared volume.

- Runs the migrate command to run any database migrations.

- Runs uWSGI on port 9000 with 4 workers. The --master flag enables the uWSGI master process, which supervises the workers. The --enable-threads flag enables multi-threading in our app, and --module app.wsgi tells uWSGI to use the wsgi module provided inside the app directory (the default one generated by Django).
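The order of the steps matters, and set -e means a failure stops everything that follows. As a rough illustration of that fail-fast behaviour, the Python sketch below runs a list of commands in order and stops at the first non-zero exit code. The run_steps helper is hypothetical, and the steps are harmless stand-ins rather than the real manage.py commands.

```python
import subprocess
import sys


def run_steps(steps):
    """Run (name, argv) pairs in order and stop at the first failure.

    Returns a tuple of (names that completed, name that failed or None).
    """
    completed = []
    for name, argv in steps:
        result = subprocess.run(argv)
        if result.returncode != 0:
            return completed, name
        completed.append(name)
    return completed, None


# Stand-in commands: the second one exits with status 1, so the third
# never runs, just as migrate would not run if collectstatic failed.
demo_steps = [
    ("wait_for_db", [sys.executable, "-c", "pass"]),
    ("collectstatic", [sys.executable, "-c", "import sys; sys.exit(1)"]),
    ("migrate", [sys.executable, "-c", "pass"]),
]
print(run_steps(demo_steps))
```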

Now we need to configure Docker to install uWSGI.

Update requirements.txt to contain the following line:

uWSGI>=2.0.19.1,<2.1

This will add uWSGI to the list of packages we want to install in our Docker image.

To install uWSGI, we need to modify the RUN block in our Dockerfile to include the linux-headers as a temporary build dependency.

Update the Dockerfile to look like this:

FROM python:3.9-alpine3.13
LABEL maintainer="londonappdeveloper.com"

ENV PYTHONUNBUFFERED 1

COPY ./requirements.txt /requirements.txt
COPY ./app /app
COPY ./scripts /scripts

WORKDIR /app
EXPOSE 8000

RUN python -m venv /py && \
    /py/bin/pip install --upgrade pip && \
    apk add --update --no-cache postgresql-client && \
    apk add --update --no-cache --virtual .tmp-deps \
        build-base postgresql-dev musl-dev linux-headers && \
    /py/bin/pip install -r /requirements.txt && \
    apk del .tmp-deps && \
    adduser --disabled-password --no-create-home app && \
    mkdir -p /vol/web/static && \
    mkdir -p /vol/web/media && \
    chown -R app:app /vol && \
    chmod -R 755 /vol && \
    chmod -R +x /scripts

ENV PATH="/scripts:/py/bin:$PATH"

USER app

CMD ["run.sh"]

This will make the following changes:

- Copies the scripts/ directory to our image (we can use this to add more helper scripts if we need).

- Adds linux-headers to the temporary build dependencies, which is required to install uWSGI via pip.

- Ensures everything in the /scripts directory is executable.

- Updates the PATH environment variable to include the /scripts directory so we can call scripts without specifying the full path.

- Adds a CMD line which will tell Docker to run our run.sh script when starting containers.

Next we will create our Docker Compose configuration specifically for deployment.

Add a new file in the root of the project (not the proxy/ subdirectory) called docker-compose-deploy.yml, with the following contents:

version: "3.9"

services:
  app:
    build:
      context: .
    restart: always
    volumes:
      - static-data:/vol/web
    environment:
      - DB_HOST=db
      - DB_NAME=${DB_NAME}
      - DB_USER=${DB_USER}
      - DB_PASS=${DB_PASS}
      - SECRET_KEY=${SECRET_KEY}
      - ALLOWED_HOSTS=${ALLOWED_HOSTS}
    depends_on:
      - db

  db:
    image: postgres:13-alpine
    restart: always
    volumes:
      - postgres-data:/var/lib/postgresql/data
    environment:
      - POSTGRES_DB=${DB_NAME}
      - POSTGRES_USER=${DB_USER}
      - POSTGRES_PASSWORD=${DB_PASS}

  proxy:
    build:
      context: ./proxy
    restart: always
    depends_on:
      - app
    ports:
      - 80:8000
    volumes:
      - static-data:/vol/static

volumes:
  postgres-data:
  static-data:

This is quite a big one, so I’ll break the explanation down by each block, working from the bottom up because it allows for a more logical explanation.

volumes

This defines some named volumes that will be managed by Docker Compose.

This ensures the data persists even after we remove the running containers, and makes reading/writing data efficient.

There are two volumes:

- postgres-data – This will store the data for our PostgreSQL database.

- static-data – This will store the static data and media files.

proxy

Here we define a service for our reverse proxy.

It has the following features:

- We set the build context to the proxy/ directory so proxy/Dockerfile is used instead of the app Dockerfile in the root directory.

- Sets restart: always, which ensures the proxy will automatically restart if it crashes. This is useful for stability when running in production.

- Sets app as a dependency using depends_on. This ensures that the app service starts before the proxy, and the app will be accessible from the proxy container using its hostname (app).

- Maps port 80 on the host to port 8000 on the container, which means we can receive requests via the default HTTP port (80).

- Maps the static-data volume to /vol/static in the container.

db

This creates a database service similar to the one we use for our development server, except:

- We set restart to always so the database will automatically restart if it crashes.

- We map a volume for postgres-data to persist the data in our database even if we remove our containers.

- Sets environment variables using the ${ENV_NAME} syntax (more on this in a minute).

app

The app block defines our Django application.

It’s similar to our dev setup, but with the following changes:

- restart is set to always to ensure the app restarts if it crashes.

- Our /vol/web path in the container is mapped to the static-data volume so data is accessible by the proxy.

- The environment variables use the ${ENV} syntax for setting values (more on this below).

You may notice above that we introduce a ${ENV} syntax when assigning environment variables.

This is a feature of Docker Compose which allows us to pull values from a file instead of hard coding them in our docker-compose-deploy.yml file.

We do this so we can store configuration values such as passwords and keys outside of our Git repo, which is recommended for security reasons.

All configuration values can be set on the server we deploy to by adding a file called .env.

It’s often useful to provide a template of this file with some dummy values that is committed to Git, so we know what values to set when deploying.

Create a new file called .env.sample in the root of the project and add the following contents:

DB_NAME=dbname
DB_USER=rootuser
DB_PASS=changeme
SECRET_KEY=changeme
ALLOWED_HOSTS=127.0.0.1

This file should be included in your Git project and should only contain dummy values that need to be changed.

Now create a real .env file in the root of the project and paste in the same contents as the .env.sample file above (we’ll use this to test our deployment locally before actually deploying).

If you used the standard Python .gitignore file provided by GitHub, .env should already be excluded. Otherwise, add it to your .gitignore file now.
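A quick way to catch a missing value before deploying is to compare the .env contents against the variables that docker-compose-deploy.yml references. Here is a minimal Python sketch, assuming simple KEY=VALUE lines; parse_env and missing_keys are hypothetical helper names, and Docker Compose's own parser supports more syntax (quoting, for example) than this does.

```python
# Variables referenced with ${...} in docker-compose-deploy.yml.
REQUIRED = {"DB_NAME", "DB_USER", "DB_PASS", "SECRET_KEY", "ALLOWED_HOSTS"}


def parse_env(text):
    """Parse KEY=VALUE lines, skipping blank lines and # comments."""
    values = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#"):
            continue
        key, separator, value = line.partition("=")
        if separator:
            values[key.strip()] = value.strip()
    return values


def missing_keys(text, required=REQUIRED):
    """Return required keys that are absent or set to an empty value."""
    present = {key for key, value in parse_env(text).items() if value}
    return sorted(set(required) - present)


sample = """DB_NAME=dbname
DB_USER=rootuser
# DB_PASS is commented out, SECRET_KEY is empty
SECRET_KEY=
ALLOWED_HOSTS=127.0.0.1
"""
print(missing_keys(sample))
```

In practice you would read the text from the real file, for example with open(".env").read(), on the machine you are deploying to.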

Now run the following commands to test our deployment locally:

docker-compose -f docker-compose-deploy.yml down --volumes
docker-compose -f docker-compose-deploy.yml build
docker-compose -f docker-compose-deploy.yml up

The above commands do the following (in order):

- Clear any existing containers which may contain data from the dev services.

- Build a new image for our app.

- Run the service.

Note: If you have any other application running on port 80, the server may fail to start. If this happens, either close the other application or temporarily change the port in docker-compose-deploy.yml.

This should start your server, and you should be able to browse to http://127.0.0.1/admin and view the login page (the base URL will return a 404 because our project doesn’t have any URL mappings).

Because we’ve removed our database and created a new one with a shared volume, we’ll need to re-create our superuser by running:

docker-compose -f docker-compose-deploy.yml run --rm app sh -c "python manage.py createsuperuser"

When you’ve done that, you should be able to log in and upload a Sample model with an attachment.

This time, the media file won’t be stored inside the data/web/ directory in the project, because it will be stored in the named volume we created called static-data.

However, the media should continue to work as it did before, but this time it’s being served from our proxy.

Deploy Project

Now we can do what we’ve all been waiting for: deploy our project to a server.

We will complete our deployment by doing the following:

- Creating a new virtual machine in AWS EC2.

- Adding a deploy key to our project in GitHub.

- Deploying the project to our virtual machine.

- Running an update.

Create AWS Account

For this step you’ll need an AWS account.

If you don’t have one, you can create one using the AWS Free Tier.

Note: The resources we’ll be using in this tutorial should fall inside the free tier. However, I take no responsibility for any charges incurred on your account.

Also note: Working with AWS comes with certain risks. It’s important that you follow best practices such as using an IAM user to access the console (instead of your root user) and keeping your credentials secure. I also recommend using MFA. However, these items are outside the scope of this tutorial.

Configure SSH Key

In order to connect to our virtual machine, we’ll need to add our SSH key to our account.

We don’t cover SSH auth in this guide because most readers will be familiar with it.

However, if you’re not sure please comment below and I’ll make a specific tutorial on it. Otherwise GitHub has a good explanation of it here.

Inside the AWS console, select Services and then EC2:

On the left menu, locate the Network & Security section, and choose Key Pairs:

On the Key pairs page, choose Actions and Import key pair:

On the Import key pair screen, give your key a name (usually your name with the device the key is stored on) and paste the contents of your public key into the large box provided.

Then click Import key pair:

Your key should appear in the list of imported key pairs.

Create EC2 Instance

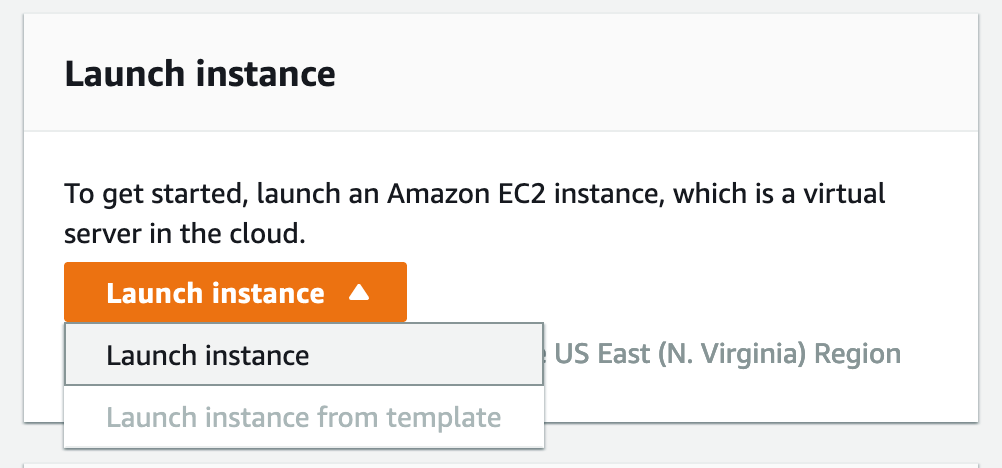

Click on EC2 Dashboard at the top of the left hand navigation.

On the EC2 page, choose Launch instance (about halfway down the page):

From the Choose an Amazon Machine Image (AMI) page, locate Amazon Linux 2 AMI and choose Select:

Note: As the label suggests, this is available in the free tier.

On the Step 2: Choose an Instance Type page, select the instance you wish to use and click Next: Configure Instance Details.

Important: AWS will charge you according to the type of instance you select. The t2.micro option should be included in the free tier, so I suggest using this one for following this guide. However, keep in mind that it may not be powerful enough to serve a real production app to a number of users. If you have a real app, you may want to pay for a more powerful instance.

On Step 3: Configure Instance Details leave everything default and click Next: Add Storage.

On the Step 4: Add Storage page you can choose how much disk space will be available to your VM, then click Review and Launch.

You will be charged for additional disk space that exceeds the amount given in the free tier. For this tutorial I recommend leaving it as 8GB, but if you are deploying a real app you may wish to add more for an additional cost.

On Step 7: Review Instance Launch select Edit security groups.

On the Step 6: Configure Security Group page select Add Rule, choose HTTP from the dropdown and click on Review and Launch.

We do this to ensure port 80 (HTTP) is accessible. This is what allows us to access the web application.

Now select Launch:

On the Select an existing key pair or create a new key pair dialog, select the key you imported earlier. Then check the box acknowledging you have access to it, and click Launch Instance.

Your instance will now be launched!

Select View Instances to go to the instance page.

Choose our new instance from the list, and you should see more details appear below it.

Select the copy icon next to Public IPv4 DNS.

This is the address we can use to connect to our server.

Install Docker on Server

Open up Terminal (or, on Windows, PowerShell or Git Bash) and connect to the VM by running:

ssh ec2-user@PUBLIC_DNS

(Replace PUBLIC_DNS with the Public IPv4 DNS address copied in the previous step.)

If prompted to add a fingerprint, type yes.

When logged in, you should see something like this:

Now it’s time to install our dependencies.

All we need on our server are:

- Git

- Docker

- Docker Compose

To install and configure them, run the commands below:

sudo yum install git -y

sudo amazon-linux-extras install docker -y

sudo systemctl enable docker.service

sudo systemctl start docker.service

sudo usermod -aG docker ec2-user

sudo curl -L "https://github.com/docker/compose/releases/download/1.29.1/docker-compose-$(uname -s)-$(uname -m)" -o /usr/local/bin/docker-compose

sudo chmod +x /usr/local/bin/docker-compose

In order, each line does the following:

- Installs Git which we will use to clone our project to the server.

- Installs docker.

- Enables the docker service so it starts automatically.

- Starts the docker service.

- Adds our ec2-user to the docker group so it has access to run Docker containers.

- Installs Docker Compose.

- Makes the Docker Compose binary executable.

Once done, type exit to log out, and then connect to the server again using SSH (this is so the group permissions are applied to our user).
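
After reconnecting, you may want to confirm the three tools are actually on your PATH. The sketch below is one way to do that, assuming Python 3 is available on the server (it isn’t one of the dependencies we installed above). The missing_tools helper is a hypothetical name used only for this example.

```python
import shutil

# The three dependencies listed in this section.
REQUIRED_TOOLS = ("git", "docker", "docker-compose")


def missing_tools(tools=REQUIRED_TOOLS, which=shutil.which):
    """Return the names in `tools` that cannot be found on the PATH.

    `which` defaults to shutil.which and is injectable for testing.
    """
    return [tool for tool in tools if which(tool) is None]


# Example (hypothetical usage on the server):
#   print(missing_tools() or "All tools found")
```

An empty list means all three executables were found. It doesn’t prove the docker group membership took effect, so running a Docker command as ec2-user is still the real test.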

Setup Deploy Key

If your code is hosted on a public GitHub repo then this step isn’t required.

However, in most cases you will want to keep your code private, so we’ll use a deploy key to access it.

While connected to your server, run the following command:

ssh-keygen -t ed25519

When prompted for the file to save the key, leave the input blank and hit enter (this will place it in the default location).

Leave the passphrase blank, or enter one if you prefer (just make sure you remember it, because you’ll need it to do deployments).

The key should be created:

This is the key that sits on the server and will be used to clone your GitHub project.

Output the contents of the public key by running the following:

cat ~/.ssh/id_ed25519.pub

Copy the key string that is output to the screen (make sure you get it all):
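
If you want to double-check that the copied string wasn’t cut off, here is a rough sketch of a sanity check. It relies on the fact that the Base64 part of an OpenSSH public key decodes to a series of length-prefixed fields, the first of which repeats the key type. The looks_complete function is a hypothetical helper, not something provided by GitHub or OpenSSH, and it only checks structure, not whether the key is the right one.

```python
import base64
import struct


def looks_complete(public_key_line):
    """Return True if an OpenSSH public key line appears structurally intact."""
    parts = public_key_line.split()
    if len(parts) < 2:
        return False
    key_type, encoded = parts[0], parts[1]
    try:
        # binascii.Error is a subclass of ValueError, so this covers bad padding too.
        blob = base64.b64decode(encoded, validate=True)
    except ValueError:
        return False

    # Walk the blob: each field is a 4-byte big-endian length followed by that many bytes.
    fields = []
    offset = 0
    while offset < len(blob):
        if offset + 4 > len(blob):
            return False
        (length,) = struct.unpack(">I", blob[offset:offset + 4])
        offset += 4
        if offset + length > len(blob):
            return False  # A field runs past the end, so the key was truncated.
        fields.append(blob[offset:offset + length])
        offset += length

    # The first field should match the key type at the start of the line.
    return bool(fields) and fields[0] == key_type.encode("utf-8")
```

Passing the pasted line to looks_complete should return True for an intact key and False if characters are missing from the end.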

If you haven’t already, now would be a good time to commit your latest code and push it to GitHub.

Head over to your GitHub project and choose Settings on the top menu:

On the left menu choose Deploy keys:

Choose Add deploy key.

Then enter a Title (eg: aws-deployment), paste the contents of your key inside the Key box and choose Add key (leave Allow write access blank):

You may need to enter your GitHub password again for security reasons.

Deploy Project to Server

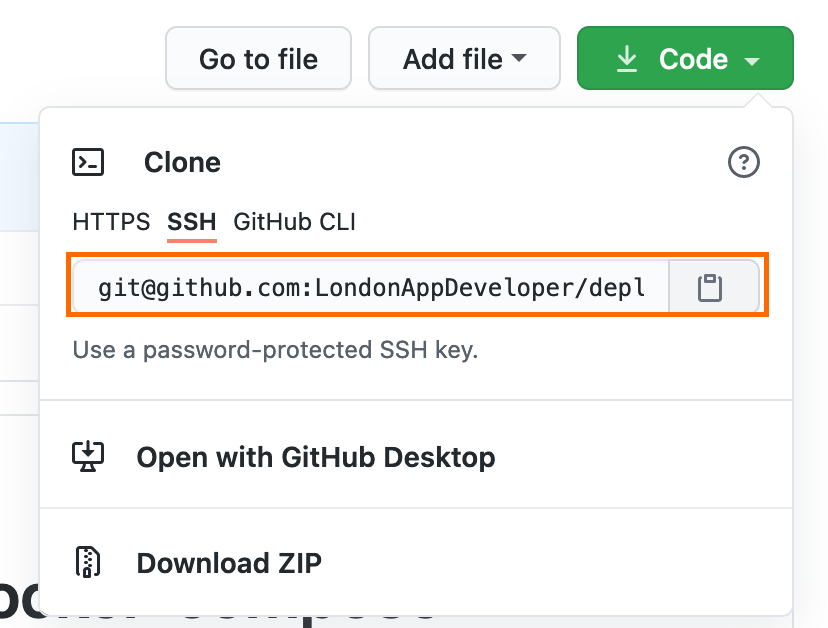

Open the repo page for your GitHub project and copy the SSH clone URL (use HTTPS for public projects).

Connect to your VM using SSH and clone the project by running:

git clone CLONE_URL

(Replace CLONE_URL with the GitHub clone URL for your project.)

Now use cd to change to your cloned project directory.

Then run the following:

cp .env.sample .env

This copies the .env.sample file to a new file called .env.

Now use your favourite editor (eg: nano or vi) to open your .env file, and update the entries to real values:

DB_NAME=appdb

DB_USER=rootuser

DB_PASS=ASecurePassword123

SECRET_KEY=AUniqueSecretKey

ALLOWED_HOSTS=ec2-54-227-111-191.compute-1.amazonaws.com

Note: It’s important you set ALLOWED_HOSTS to the domain name you’ll be using to access your Django app. I’m going to use the Public IPv4 DNS address (which we also use for SSH) for testing. You can list multiple hosts by separating them with commas, like ALLOWED_HOSTS=one.example.com,two.example.com,three.example.com.
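
To illustrate how a comma-separated value like this can end up as a Python list, here is a minimal sketch. The parse_allowed_hosts function is a hypothetical helper, and the way it is wired into settings is an assumption: your own settings.py may read the variable differently.

```python
import os


def parse_allowed_hosts(raw):
    """Split a comma-separated host string, dropping blanks and stray spaces."""
    return [host.strip() for host in raw.split(",") if host.strip()]


# Assumed usage inside settings.py: read the value passed in from .env.
ALLOWED_HOSTS = parse_allowed_hosts(os.environ.get("ALLOWED_HOSTS", ""))
```

Dropping empty entries means an unset variable or a trailing comma doesn’t add a blank host to the list.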

It’s a good idea to store a copy of this file in a secure location, in case you lose the credentials.
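
For the SECRET_KEY and DB_PASS entries, you’ll want random values rather than the placeholders shown above. One possible way to generate them is with Python’s secrets module, as in the sketch below. The generate_secret name and the default length of 50 are my own choices for illustration.

```python
import secrets


def generate_secret(length=50):
    """Return a random string of exactly `length` URL-safe characters."""
    # token_urlsafe(n) takes a byte count and returns more than n characters,
    # so we generate with n = length and trim the result to size.
    return secrets.token_urlsafe(length)[:length]


# Example (hypothetical usage): print lines you could paste into .env.
#   print("SECRET_KEY=" + generate_secret())
#   print("DB_PASS=" + generate_secret(32))
```

The output only contains letters, digits, hyphens and underscores, which avoids characters that could need quoting in a .env file.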

Now you can start the project by running:

docker-compose -f docker-compose-deploy.yml up -d

If you open your server’s DNS address in a browser, you should be able to view the admin page:

Because this is a new database, you’ll need to create a superuser by running:

docker-compose -f docker-compose-deploy.yml run --rm app sh -c "python manage.py createsuperuser"

Log in to the Django admin and create a new Sample model with an attachment to verify static files are working.

Pushing Updates

To deploy updates, follow these steps:

- Push the changes to GitHub.

- SSH to the server.

- Pull the changes using git pull.

- Run the following commands:

docker-compose -f docker-compose-deploy.yml build app

docker-compose -f docker-compose-deploy.yml up --no-deps -d app

This will rebuild the app container and load it without stopping the database or nginx proxy.
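
If you find yourself repeating the server-side steps often, they could be wrapped in a small script. The following is only a sketch: update_commands and run_update are hypothetical names, it assumes Python 3 is available on the server, and it needs to be run from inside the cloned project directory.

```python
import subprocess

# The compose file used throughout this tutorial.
COMPOSE_FILE = "docker-compose-deploy.yml"


def update_commands(compose_file=COMPOSE_FILE, service="app"):
    """Return the update commands from this section as argument lists, in order."""
    compose = ["docker-compose", "-f", compose_file]
    return [
        ["git", "pull"],
        compose + ["build", service],
        compose + ["up", "--no-deps", "-d", service],
    ]


def run_update(runner=subprocess.run):
    """Run each command in turn; check=True stops at the first failure."""
    for command in update_commands():
        runner(command, check=True)


# Example (hypothetical usage on the server):
#   run_update()
```

Because check=True raises an error when a command exits with a non-zero status, a failed git pull or build stops the script before the running container is replaced.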

That’s how you deploy Django using Docker Compose.

I hope you found this useful. If you have any questions or if you think there is a better approach to any of the steps, leave them in the comments below so we can all learn from each other.

My volume sync works only one “pair” at a time. Either it works for ./app:/app OR ./data/web:/vol/web, but not together. I figured that out by taking off one entry at a time. Any suggestions?

Next, open docker-compose.yml, locate the app service and update the volumes block to the following:

volumes:

- ./app:/app

- ./data/web:/vol/web

I can’t upload static JS files to the data/web/ directory. I can’t see the static folder, only the media folder.

When we start developing the project in the development environment, is it not enough to do docker-compose up?

When I do this I get an authentication error:

password authentication failed for user "devuser"

docker-django-app-db-1 | 2021-10-26 22:23:37.909 UTC [45] DETAIL: Role "devuser" does not exist.

This is 2 years later, but I ran into a similar issue. What I had to do was to connect manually to Postgres and then create the appropriate user and permission.

```
# Connect to the app's terminal
docker compose run -it app sh

# Connect to Postgres via the terminal
psql -U postgres -h db

# Inside Postgres, create the user and give it permission to log in
CREATE USER devuser WITH PASSWORD 'changeme';
ALTER ROLE devuser WITH LOGIN;
```

Thanks for the explanation. If I want the proxy server to serve over HTTPS, what should I do?

Have you tried GeoDjango with Docker? I am trying to find the right setup for a GeoDjango app, as it needs a bunch of spatial libraries to run.

Well done Sir, this is an awesome manual

Hello,

First and foremost, thanks for a great tutorial!

Just one question: how do I serve static files like CSS and JS? According to the documentation online, I need to create a static folder under the root folder, and CSS, JS and images can go under it, but I am not able to make it work. It does not show or serve the static files at the moment. Any idea?

Hey, no worries! You would serve them from static files. So they would go in the “static” dir of the app, and get collected with the `collectstatic` command.